In modern analytical laboratories, method validation is the foundation of reliable, defensible data. Whether you are developing assays for pharmaceuticals, environmental testing, food safety, or metabolomics research, your results are only as strong as the standards you use to measure them. Among the most critical tools in this process are mass spectrometry reference standards, which provide accuracy, reproducibility, and confidence at every stage of method development and validation.

This article explores how mass spectrometry reference standards support method validation, why they are indispensable in regulated and research environments, and how laboratories can use them strategically to meet quality and compliance requirements.

Understanding Method Validation in Mass Spectrometry

Method validation is the documented process of proving that an analytical method is suitable for its intended purpose. In mass spectrometry (MS), this means demonstrating that the method can reliably identify and quantify analytes across a defined range with acceptable precision and accuracy.

Key validation parameters typically include:

- Accuracy
- Precision (repeatability and reproducibility)
- Specificity/selectivity
- Linearity
- Limit of detection (LOD)
- Limit of quantitation (LOQ)
- Robustness
- Stability

Each of these parameters depends heavily on the quality of calibration materials and controls. This is where mass spectrometry reference standards play a central role.

What Are Mass Spectrometry Reference Standards?

Mass spectrometry reference standards are well-characterized compounds used as benchmarks to verify instrument performance and method accuracy. They may be:

- Native (unlabeled) standards
- Stable isotope–labeled standards
- Complex mixtures designed to mimic real samples

These standards are produced under strict quality controls, with known purity, concentration, and structural identity. When incorporated into analytical workflows, mass spectrometry reference standards enable laboratories to compare measured values against known, traceable references.

Why Reference Standards Are Critical for Method Validation

1. Establishing Accuracy and Trueness

Accuracy refers to how close a measured value is to the true value. During validation, laboratories must demonstrate that their method produces results that align with known concentrations.

By analyzing mass spectrometry reference standards with certified concentrations, analysts can directly assess bias and recovery. Any deviation from expected values highlights systematic errors in sample preparation, chromatography, ionization, or data processing.

Without reference standards, “accuracy” becomes a theoretical concept rather than a measurable attribute.
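The bias and recovery assessment described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function name `recovery_and_bias`, the replicate values, and the certified concentration are all hypothetical.

```python
from statistics import mean

def recovery_and_bias(measured, certified):
    """Return (mean recovery %, relative bias %) for replicate measurements
    of a reference standard with a certified concentration."""
    if certified <= 0:
        raise ValueError("certified concentration must be positive")
    avg = mean(measured)
    recovery_pct = 100.0 * avg / certified
    bias_pct = 100.0 * (avg - certified) / certified
    return recovery_pct, bias_pct

# Hypothetical replicate results (ng/mL) for a standard certified at 50.0 ng/mL
replicates = [48.0, 49.0, 47.5, 48.5, 47.0]
recovery, bias = recovery_and_bias(replicates, 50.0)
print(f"recovery = {recovery:.1f}%, bias = {bias:+.1f}%")
```

A consistently negative bias like the one in this made-up data would prompt a look at sample preparation losses or ion suppression. The acceptance limits for recovery depend on the guideline and application, so none are hard-coded here.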

2. Supporting Precision and Reproducibility

Precision measures the consistency of results under the same conditions (repeatability), across different days, analysts, or instruments within one laboratory (often called intermediate precision), and between laboratories (reproducibility).

Using mass spectrometry reference standards allows laboratories to:

- Run repeated injections of the same material
- Track signal variability over time
- Compare performance across instruments or sites

This consistency is essential for validating methods that will be used routinely or transferred between laboratories.
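Precision from repeated injections is commonly summarized as a percent relative standard deviation (%RSD). The sketch below assumes peak areas from replicate injections of the same reference standard. The function name `percent_rsd` and all numbers are hypothetical.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Percent relative standard deviation (sample SD / mean * 100)."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean response is zero; %RSD is undefined")
    return 100.0 * stdev(values) / avg

# Hypothetical peak areas for one reference standard, grouped by day
day_1 = [10250, 10190, 10310, 10275, 10220]
day_2 = [10480, 10510, 10390, 10450, 10530]

print(f"within-day %RSD (day 1): {percent_rsd(day_1):.2f}")
print(f"within-day %RSD (day 2): {percent_rsd(day_2):.2f}")
print(f"across-days %RSD:        {percent_rsd(day_1 + day_2):.2f}")
```

Comparing the within-day figures against the pooled figure gives a first indication of whether day-to-day variation contributes more than injection-to-injection variation.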

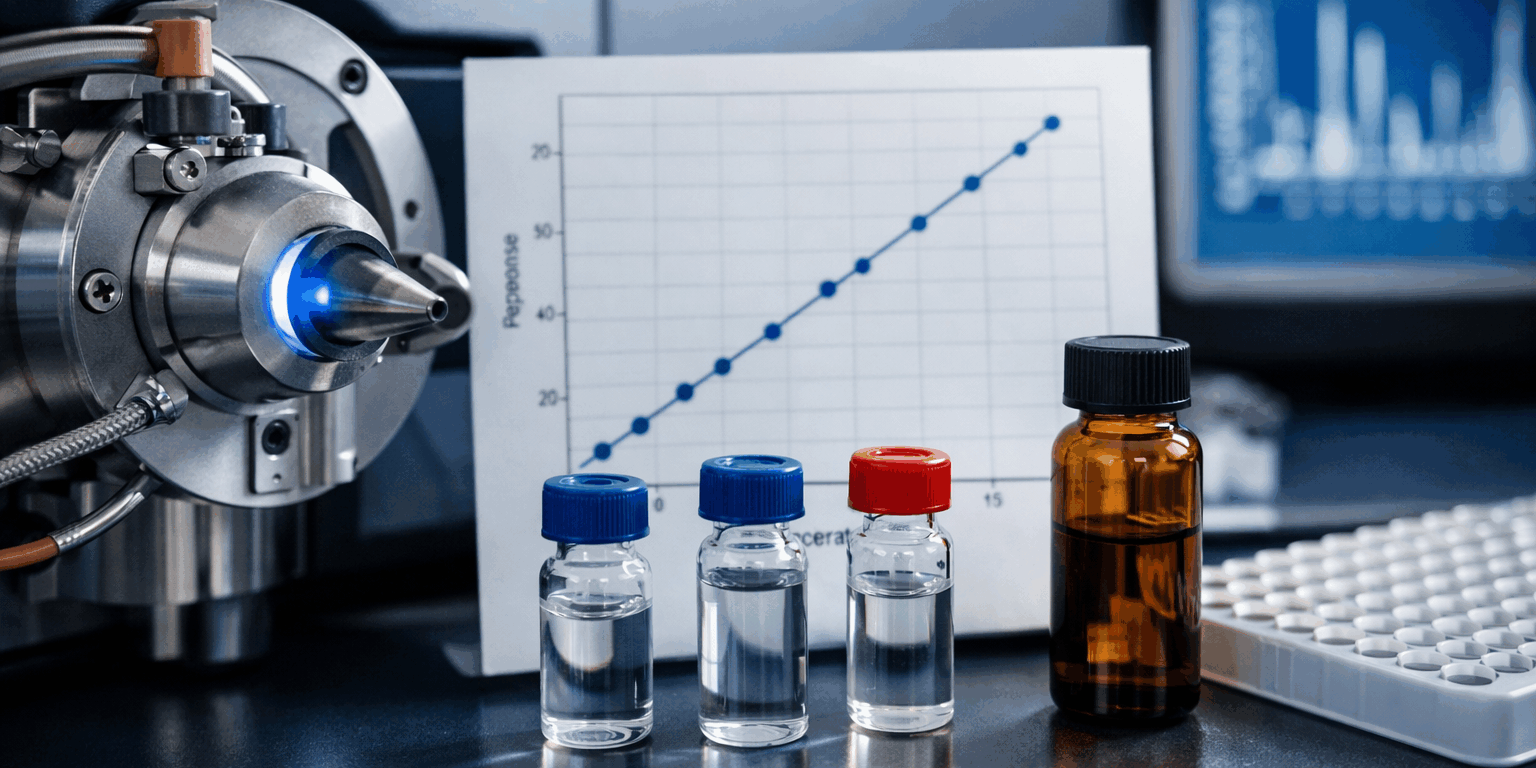

3. Verifying Linearity and Dynamic Range

Linearity demonstrates that an analytical response is proportional to analyte concentration over a specified range. Validation guidelines typically require multiple concentration levels analyzed in replicate.

Mass spectrometry reference standards enable accurate preparation of calibration curves by providing known concentrations across low, medium, and high levels. Because these standards are well-characterized, they reduce uncertainty in curve fitting and improve confidence in quantitative results.
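To illustrate the calibration step, here is a minimal sketch of an unweighted least-squares fit of response against known standard concentrations, with back-calculation of an unknown. The names `fit_calibration` and `back_calculate` and the data are hypothetical. Many quantitative MS methods use weighted regression (for example 1/x or 1/x²) instead, which this sketch does not cover.

```python
def fit_calibration(conc, resp):
    """Ordinary least-squares line through (conc, resp).
    Returns (slope, intercept, r_squared)."""
    if len(conc) != len(resp) or len(conc) < 3:
        raise ValueError("need at least three paired calibration points")
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    if sxx == 0:
        raise ValueError("all concentrations are identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, resp))
    ss_tot = sum((y - mean_y) ** 2 for y in resp)
    r_squared = 1.0 - ss_res / ss_tot if ss_tot > 0 else 1.0
    return slope, intercept, r_squared

def back_calculate(response, slope, intercept):
    """Concentration predicted by the fitted line for a measured response."""
    return (response - intercept) / slope

# Hypothetical calibration levels (ng/mL) and instrument responses
levels = [1.0, 5.0, 10.0, 50.0, 100.0]
areas = [210.0, 1020.0, 1985.0, 10110.0, 19950.0]
slope, intercept, r2 = fit_calibration(levels, areas)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r2:.5f}")
print(f"unknown at area 5000 -> {back_calculate(5000.0, slope, intercept):.2f} ng/mL")
```

A high R² alone does not prove linearity. Back-calculating each calibration level and checking its deviation from the nominal value is usually more informative.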

4. Determining Limits of Detection and Quantitation

LOD and LOQ define the lowest concentration levels that can be reliably detected and quantified. These limits are critical in applications such as toxicology, environmental monitoring, and clinical research.

By diluting mass spectrometry reference standards to very low levels, laboratories can empirically determine detection thresholds rather than relying on theoretical estimates. This data-driven approach strengthens validation documentation and regulatory submissions.
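One common way to turn low-level measurements into numbers is the approach described in ICH Q2, which estimates LOD as 3.3σ/S and LOQ as 10σ/S, where σ is the standard deviation of the response and S is the calibration slope. The sketch below assumes σ is taken from replicate injections of a diluted reference standard. The function name and values are hypothetical, and signal-to-noise approaches are an equally valid alternative.

```python
from statistics import stdev

def lod_loq(low_level_responses, slope):
    """Estimate (LOD, LOQ) in concentration units from the SD of replicate
    low-level responses and the calibration slope (response per unit conc)."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    sigma = stdev(low_level_responses)
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical responses from seven injections of a heavily diluted standard,
# and a hypothetical slope of 200 area units per ng/mL
responses = [205.0, 198.0, 211.0, 190.0, 202.0, 207.0, 195.0]
lod, loq = lod_loq(responses, slope=200.0)
print(f"LOD ~ {lod:.3f} ng/mL, LOQ ~ {loq:.3f} ng/mL")
```

Estimates like these are normally confirmed by analyzing standards prepared at or near the calculated limits.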

5. Enhancing Specificity and Selectivity

Specificity ensures that the method measures the intended analyte without interference from other compounds. In complex matrices, such as biological fluids or environmental samples, this can be particularly challenging.

Reference standards help confirm:

- Correct retention times
- Accurate mass values
- Fragmentation patterns in MS/MS experiments

Comparing sample signals to mass spectrometry reference standards ensures that peaks are correctly identified, reducing the risk of false positives or misidentification.
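The retention time and accurate mass comparison can be expressed as a simple tolerance check. In this sketch the function names, the 5 ppm mass tolerance, and the 0.1 minute retention time window are illustrative assumptions. Real acceptance criteria depend on the instrument and the applicable guideline.

```python
def ppm_error(measured_mz, reference_mz):
    """Mass error of a measured m/z relative to the reference, in ppm."""
    return 1e6 * (measured_mz - reference_mz) / reference_mz

def matches_standard(sample_mz, sample_rt, std_mz, std_rt,
                     ppm_tol=5.0, rt_tol_min=0.1):
    """True if the sample peak agrees with the reference standard in both
    accurate mass and retention time (tolerances are assumed values)."""
    mass_ok = abs(ppm_error(sample_mz, std_mz)) <= ppm_tol
    rt_ok = abs(sample_rt - std_rt) <= rt_tol_min
    return mass_ok and rt_ok

# Hypothetical reference standard: m/z 300.0000 eluting at 5.00 min
print(matches_standard(300.0009, 5.05, 300.0000, 5.00))  # about 3 ppm off
print(matches_standard(300.0060, 5.02, 300.0000, 5.00))  # about 20 ppm off
```

MS/MS fragment matching would add a third criterion, for example comparing ion ratios against the standard, which is left out here for brevity.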

The Role of Isotope-Labeled Reference Standards

Stable isotope–labeled standards are especially powerful in method validation. Because they behave almost identically to native analytes during sample preparation and analysis, they serve as internal controls for variability.

Benefits include:

- Compensation for matrix effects
- Correction for ion suppression or enhancement
- Improved quantitative accuracy

In validation studies, isotope-labeled mass spectrometry reference standards help demonstrate robustness under real-world conditions, where sample complexity can otherwise compromise reliability.
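A short sketch shows why a co-eluting labeled standard corrects for suppression: quantitation is based on the ratio of analyte signal to internal standard signal, so a loss that affects both equally cancels out. The function names, peak areas, and the ratio-based calibration slope below are hypothetical.

```python
def response_ratio(analyte_area, is_area):
    """Ratio of analyte peak area to isotope-labeled internal standard area."""
    if is_area <= 0:
        raise ValueError("internal standard area must be positive")
    return analyte_area / is_area

def quantify(analyte_area, is_area, slope, intercept=0.0):
    """Concentration from a calibration of response ratio vs. concentration."""
    return (response_ratio(analyte_area, is_area) - intercept) / slope

# Assumed calibration: ratio rises by 0.1 per ng/mL
SLOPE = 0.1

clean = quantify(analyte_area=20000.0, is_area=10000.0, slope=SLOPE)
# Same sample with 40% ion suppression acting on both analyte and labeled IS
suppressed = quantify(analyte_area=12000.0, is_area=6000.0, slope=SLOPE)

print(f"clean matrix:      {clean:.1f} ng/mL")
print(f"suppressed matrix: {suppressed:.1f} ng/mL")
```

The cancellation holds only to the extent that the labeled standard and the native analyte really experience the same suppression, which is the assumption validation experiments are meant to test.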

Supporting Robustness and Ruggedness Testing

Robustness testing evaluates how small, deliberate changes affect method performance. Examples include slight variations in:

- Mobile phase composition
- Column temperature
- Flow rate
- Instrument settings

By analyzing mass spectrometry reference standards under these altered conditions, laboratories can assess whether results remain within acceptable limits. This evidence is critical when demonstrating that a method is fit for routine use.
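The comparison against acceptable limits might look like the following sketch, which reports the percent deviation of each altered condition from the nominal result. The function name `robustness_report`, the condition labels, the measured values, and the 5% acceptance limit are all assumptions chosen for illustration.

```python
def robustness_report(nominal, variants, limit_pct=5.0):
    """Map each altered condition to (percent deviation from the nominal
    result, whether that deviation is within the acceptance limit)."""
    if nominal == 0:
        raise ValueError("nominal result must be non-zero")
    report = {}
    for condition, value in variants.items():
        deviation = 100.0 * (value - nominal) / nominal
        report[condition] = (deviation, abs(deviation) <= limit_pct)
    return report

# Hypothetical results (ng/mL) for one reference standard
nominal_result = 100.0
altered = {
    "flow rate +5%": 101.2,
    "column temperature +5 C": 98.7,
    "organic modifier -2%": 92.0,
}

for condition, (dev, ok) in robustness_report(nominal_result, altered).items():
    status = "pass" if ok else "FAIL"
    print(f"{condition:<26} {dev:+6.1f}%  {status}")
```

A failing condition does not necessarily invalidate the method, but it identifies a parameter that needs tighter control in the written procedure.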

Reference Standards and Regulatory Compliance

Regulatory agencies such as the FDA and EMA, along with accreditation bodies that assess laboratories against ISO standards, expect validated methods to be built on traceable, well-characterized standards. Proper use of mass spectrometry reference standards supports compliance with guidelines and standards including:

- ICH Q2(R1)
- FDA bioanalytical method validation guidance
- ISO/IEC 17025

In audits or inspections, documented use of reference standards provides clear proof that validation claims are supported by objective evidence.

For deeper insight into regulatory expectations, see the FDA’s bioanalytical method validation guidance on the official FDA website.

Best Practices for Using Mass Spectrometry Reference Standards

To maximize their value during method validation, laboratories should follow these best practices:

- Use high-quality, certified materials: Select reference standards with documented purity, concentration, and stability.
- Store and handle standards correctly: Improper storage can degrade standards and invalidate results.
- Document everything: Record lot numbers, preparation steps, and expiration dates in validation reports.
- Re-verify periodically: Use reference standards to monitor ongoing method performance after validation.

By integrating mass spectrometry reference standards into both validation and routine analysis, laboratories maintain long-term data integrity.
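As one way to make the documentation practice concrete, the record below captures the details a validation report would typically cite for each standard. The class name `StandardRecord`, its fields, and the example values are hypothetical and not tied to any particular LIMS or vendor format.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class StandardRecord:
    """Traceability details for one reference standard (illustrative fields)."""
    name: str
    lot_number: str
    certified_conc: float
    units: str
    expiry: date
    prep_steps: tuple = ()

    def is_expired(self, on: date) -> bool:
        """True if the given date falls after the expiration date."""
        return on > self.expiry

# Hypothetical entry; every value here is made up for the example
record = StandardRecord(
    name="Analyte X stock solution",
    lot_number="LOT-0001",
    certified_conc=100.0,
    units="ug/mL",
    expiry=date(2030, 1, 31),
    prep_steps=("thaw at room temperature", "dilute 1:10 in methanol"),
)
print(record.lot_number, "expired:", record.is_expired(date.today()))
```

Checking `is_expired` before each analytical run is a cheap safeguard against using a degraded standard.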

How IROA Technologies Supports Reliable Validation

At IROA Technologies, reference standards are designed to address real-world analytical challenges. By combining rigorous manufacturing controls with innovative design, these solutions help laboratories achieve reliable validation outcomes while reducing uncertainty and rework.

The strategic use of mass spectrometry reference standards not only supports compliance but also enhances scientific confidence—ensuring that data stands up to scrutiny in research, clinical, and regulatory settings.

Frequently Asked Questions (FAQs)

1. Why are mass spectrometry reference standards essential for method validation?

Mass spectrometry reference standards provide known, traceable benchmarks that allow laboratories to measure accuracy, precision, linearity, and detection limits. Without them, validation results lack objective confirmation.

2. How often should reference standards be used after validation?

They should be used routinely to monitor method performance, instrument stability, and long-term reproducibility. Regular checks help identify drift or degradation early.

3. What is the difference between reference standards and internal standards?

Reference standards are typically used for calibration and validation, while internal standards (often isotope-labeled) are added to samples to correct for variability during analysis. Both play complementary roles.

4. Are isotope-labeled standards required for validation?

They are not always required, but they significantly improve accuracy and robustness, especially in complex matrices. Many regulatory guidelines strongly recommend their use.

5. Can one reference standard be used across multiple methods?

In some cases, yes. However, standards should always be evaluated for suitability within the specific method, matrix, and concentration range being validated.

Final Thoughts

Method validation is not just a regulatory checkbox—it is a scientific commitment to data quality. By anchoring validation studies in high-quality mass spectrometry reference standards, laboratories build methods that are accurate, reproducible, and defensible.

From initial development to routine application, mass spectrometry reference standards remain a cornerstone of reliable analytical science, empowering laboratories to deliver results they—and their stakeholders—can trust.